Your Company Doesn’t Have an AI Strategy. It Has API Dependency

Feb 18, 2026

In 2023, integrating generative AI meant experimentation. By 2024, it meant copilots embedded in productivity tools. By 2025, it meant large-scale deployment of external model APIs across customer communication, internal automation, analytics, and workflow orchestration. By early 2026, a structural reality has emerged: many enterprises that describe their approach as an “AI strategy” are, in fact, structurally dependent on a small number of external model providers for core operational reasoning. That is not strategic autonomy. It is infrastructural reliance.

The difference is not semantic. It defines control.

From Cloud Lock-In to Model Lock-In

Enterprise IT has navigated concentration risk before. Between 2010 and 2020, public cloud adoption consolidated heavily around Amazon Web Services, Microsoft Azure, and Google Cloud. According to Synergy Research Group, by 2025 these three hyperscalers controlled roughly two-thirds of global cloud infrastructure revenue. Enterprises responded with multi-cloud strategies, redundancy planning, and sovereign data architectures. Even so, cloud migrations required years of architectural adaptation.

AI has moved through consolidation faster.

By Q4 2025, IDC estimated that more than 70% of mid-to-large enterprises using generative AI relied primarily on third-party model APIs rather than self-hosted or proprietary models. In practical terms, that means reasoning—the cognitive core of digital workflows—was outsourced to vendors such as OpenAI, Anthropic, Google DeepMind, and, in specific regions, Baidu or Alibaba Cloud.

Cloud providers sell compute capacity. Model providers sell inference and reasoning. The latter is harder to replicate and more difficult to substitute.

Training frontier-scale models in 2025 required capital expenditures measured in billions of dollars. Even the earlier GPT-4 was estimated by independent analysts to have cost OpenAI in excess of $100 million in compute alone to train. Google DeepMind’s Gemini training cycles involved comparable infrastructure investments. Even fine-tuning and hosting open-weight models at enterprise scale requires GPU clusters that few organizations maintain internally.

As a result, dependency shifts from infrastructure tooling to cognitive infrastructure.

The Pricing Reality: Volatility at Scale

API dependency is not theoretical. It is measurable in cost structures.

In late 2025, several large model vendors adjusted pricing tiers for high-volume inference customers. While official statements framed changes as optimization of resource allocation, enterprise clients operating high-frequency AI workloads reported effective cost increases ranging between 20% and 35% in specific usage brackets, particularly in multilingual and long-context deployments.

For organizations using AI in voice-based customer communication, token consumption scales rapidly. A customer support center handling 500,000 voice interactions per month—each involving real-time transcription, contextual reasoning, and response generation—may consume tens of millions of tokens daily. A marginal increase in token pricing directly translates into six- or seven-figure annual cost adjustments.
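The arithmetic behind that exposure can be sketched in a few lines. The 500,000 monthly interactions come from the example above; the tokens per interaction, the blended price per million tokens, and the 30% increase are illustrative assumptions, not vendor figures.

```python
def annual_token_cost(interactions_per_month: int,
                      tokens_per_interaction: int,
                      usd_per_million_tokens: float) -> float:
    """Annual spend in USD, assuming one flat blended per-token price."""
    tokens_per_year = interactions_per_month * 12 * tokens_per_interaction
    return tokens_per_year / 1_000_000 * usd_per_million_tokens


# 500,000 interactions/month is from the text; every other input is hypothetical.
# 3,000 tokens per interaction works out to roughly 50 million tokens per day.
baseline = annual_token_cost(500_000, 3_000, 20.0)
after_increase = annual_token_cost(500_000, 3_000, 20.0 * 1.30)
delta = after_increase - baseline

print(f"baseline: ${baseline:,.0f}  after: ${after_increase:,.0f}  delta: ${delta:,.0f}")
```

Under these assumed inputs the increase adds about $108,000 a year. Heavier per-interaction usage or higher unit prices push the same calculation toward seven figures.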

Unlike SaaS pricing, which is typically predictable within annual contracts, LLM API pricing structures remain dynamic. Rate limits, context window pricing, and premium feature tiers evolve. Enterprises often adapt only after the fact.

This is not budgetary variance. It is structural exposure.

Latency and Degradation: Operational Risk at the Edge

Beyond pricing, performance variability introduces risk.

In January 2026, several European enterprises reported temporary inference latency spikes when routing API calls to external model providers during peak demand windows. While these were not full outages, response times exceeding 1.5 seconds were observed in certain geographies. In conversational systems, particularly voice AI, where natural interaction requires sub-500-millisecond responsiveness, latency degradation has immediate business impact.

According to internal analytics shared by a large European e-commerce platform during an industry roundtable in February 2026, a conversational latency increase from 400 milliseconds to 1.2 seconds correlated with a 9–12% drop in session continuation rates. In high-volume customer communication environments, this directly affects conversion and retention.

When AI mediates customer communication, degradation equals lost revenue.

Enterprises frequently architect orchestration layers assuming consistent inference performance. Few implement robust multi-provider failover logic with identical behavioral guarantees. In practice, switching providers during latency events requires prompt recalibration and regression testing—processes that are not instantaneous.
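A minimal sketch of latency-budget failover, assuming each provider is wrapped in a plain callable that takes a prompt and returns text. The `Provider` type, the function name, and the budget value are hypothetical and not tied to any vendor SDK.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Callable, Sequence

Provider = Callable[[str], str]  # hypothetical adapter: prompt in, completion out


def complete_with_failover(prompt: str,
                           providers: Sequence[tuple[str, Provider]],
                           budget_s: float) -> tuple[str, str]:
    """Try providers in order; skip any that errors or exceeds budget_s seconds."""
    pool = ThreadPoolExecutor(max_workers=max(1, len(providers)))
    try:
        for name, call in providers:
            future = pool.submit(call, prompt)
            try:
                return name, future.result(timeout=budget_s)
            except FutureTimeout:
                continue  # too slow: stop waiting (the call itself is not cancelled)
            except Exception:
                continue  # provider-side error: fall through to the next provider
        raise RuntimeError("all providers failed or exceeded the latency budget")
    finally:
        pool.shutdown(wait=False)  # do not block the caller on abandoned calls


def slow_primary(prompt: str) -> str:  # stand-in for a degraded provider
    time.sleep(0.3)
    return "primary: " + prompt


def fast_secondary(prompt: str) -> str:  # stand-in for a healthy fallback
    return "secondary: " + prompt


used, reply = complete_with_failover(
    "Where is my order?",
    [("primary", slow_primary), ("secondary", fast_secondary)],
    budget_s=0.05,
)
```

Routing is the easy part. The fallback only helps if its prompts and thresholds have already been validated against the secondary model, which is exactly the recalibration work described above.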

This is not a tooling inconvenience. It is a real-time operational dependency.

Model Drift and Behavioral Variability

Another under-discussed dimension of API dependency is behavioral drift.

Frontier model vendors regularly release updates to improve safety, reasoning coherence, multilingual support, or instruction adherence. These updates are typically beneficial. However, subtle shifts in output structure or classification reliability can cascade through enterprise automation pipelines.

In early 2026, a multinational retail group attempting to optimize costs migrated part of its AI workload from one provider to another. The transition required over twelve weeks of prompt re-engineering and workflow recalibration due to differences in response formatting and edge-case handling. Intent classification variance in multilingual contexts required rebuilding internal validation datasets.

Model interchangeability is conceptually attractive. Operationally, it is expensive.

Unlike databases or containerized workloads, reasoning systems embed probabilistic behavior that influences downstream logic. A minor shift in classification confidence relative to fixed thresholds may alter escalation flows or trigger incorrect workflow routing.

Most enterprises budget for infrastructure redundancy. Few budget for continuous model behavior revalidation.
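Continuous revalidation can start small. The sketch below assumes a labeled "golden" set of inputs with expected intents and a `classify` callable that wraps whichever model is in production; both names, the baseline, and the tolerance are hypothetical.

```python
from typing import Callable, Sequence

GoldenSet = Sequence[tuple[str, str]]  # (input text, expected label)


def agreement_rate(classify: Callable[[str], str], golden: GoldenSet) -> float:
    """Fraction of golden examples whose predicted label matches the expected one."""
    if not golden:
        raise ValueError("golden set must not be empty")
    hits = sum(1 for text, expected in golden if classify(text) == expected)
    return hits / len(golden)


def has_drifted(classify: Callable[[str], str], golden: GoldenSet,
                baseline: float, tolerance: float = 0.02) -> bool:
    """True when agreement falls more than `tolerance` below the recorded baseline."""
    return agreement_rate(classify, golden) < baseline - tolerance


# Illustrative data and a stub classifier standing in for a real model call.
golden = [
    ("I want my money back", "refund"),
    ("Where is my parcel?", "tracking"),
    ("Cancel my subscription", "cancel"),
    ("Talk to a person", "escalate"),
]


def stub_classifier(text: str) -> str:
    return "refund" if "money" in text else "tracking"


rate = agreement_rate(stub_classifier, golden)
drifted = has_drifted(stub_classifier, golden, baseline=1.0)
```

Running a check like this on a schedule, and again after every announced vendor update, turns behavioral drift from a surprise into a monitored metric.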

Regulatory and Sovereignty Exposure

AI dependency is also geopolitical.

In 2025 and early 2026, enforcement mechanisms around the European Union AI Act intensified, particularly regarding high-risk systems and transparency requirements. Simultaneously, data localization frameworks expanded in parts of Asia-Pacific and the Middle East. For enterprises operating across jurisdictions, model selection now intersects with regulatory compliance.

If a company relies exclusively on an external LLM API hosted in limited geographic regions, changes in cross-border inference policies may require architectural restructuring. Cloud compliance strategies addressed data residency. AI compliance must address reasoning residency—where inference occurs and under what legal frameworks.
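One way to make reasoning residency explicit is a policy table consulted before every inference call. This is a minimal sketch: the jurisdictions, region names, and endpoint URLs are placeholders, not real provider regions or a statement of what any regulation permits.

```python
# Hypothetical policy table: jurisdiction -> regions where inference may run.
ALLOWED_INFERENCE_REGIONS: dict[str, set[str]] = {
    "EU": {"eu-west", "eu-central"},
    "UAE": {"me-central"},
}


def select_endpoint(jurisdiction: str, endpoints: dict[str, str]) -> str:
    """Return the first endpoint URL whose region is permitted for the jurisdiction.

    Fails closed: an unknown jurisdiction or a missing compliant region
    raises instead of silently routing the request somewhere else.
    """
    allowed = ALLOWED_INFERENCE_REGIONS.get(jurisdiction, set())
    for region, url in endpoints.items():
        if region in allowed:
            return url
    raise LookupError(f"no compliant inference region for {jurisdiction!r}")


# Placeholder endpoints for illustration only.
endpoints = {
    "us-east": "https://us.example.invalid/v1",
    "eu-west": "https://eu.example.invalid/v1",
}
chosen = select_endpoint("EU", endpoints)
```

Keeping the table in configuration rather than in code means a policy change becomes a reviewed config update instead of an architectural restructuring.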

In regulated sectors such as financial services, audit continuity becomes more complex when model vendors update architectures without granular transparency. If reasoning logic shifts and documentation generation pipelines are built on external APIs, compliance teams must revalidate outputs without access to underlying weights or training data.

This creates a governance asymmetry.

Enterprises embed AI in revenue-generating and compliance-critical workflows. Model vendors retain unilateral update authority.

The Architecture Illusion

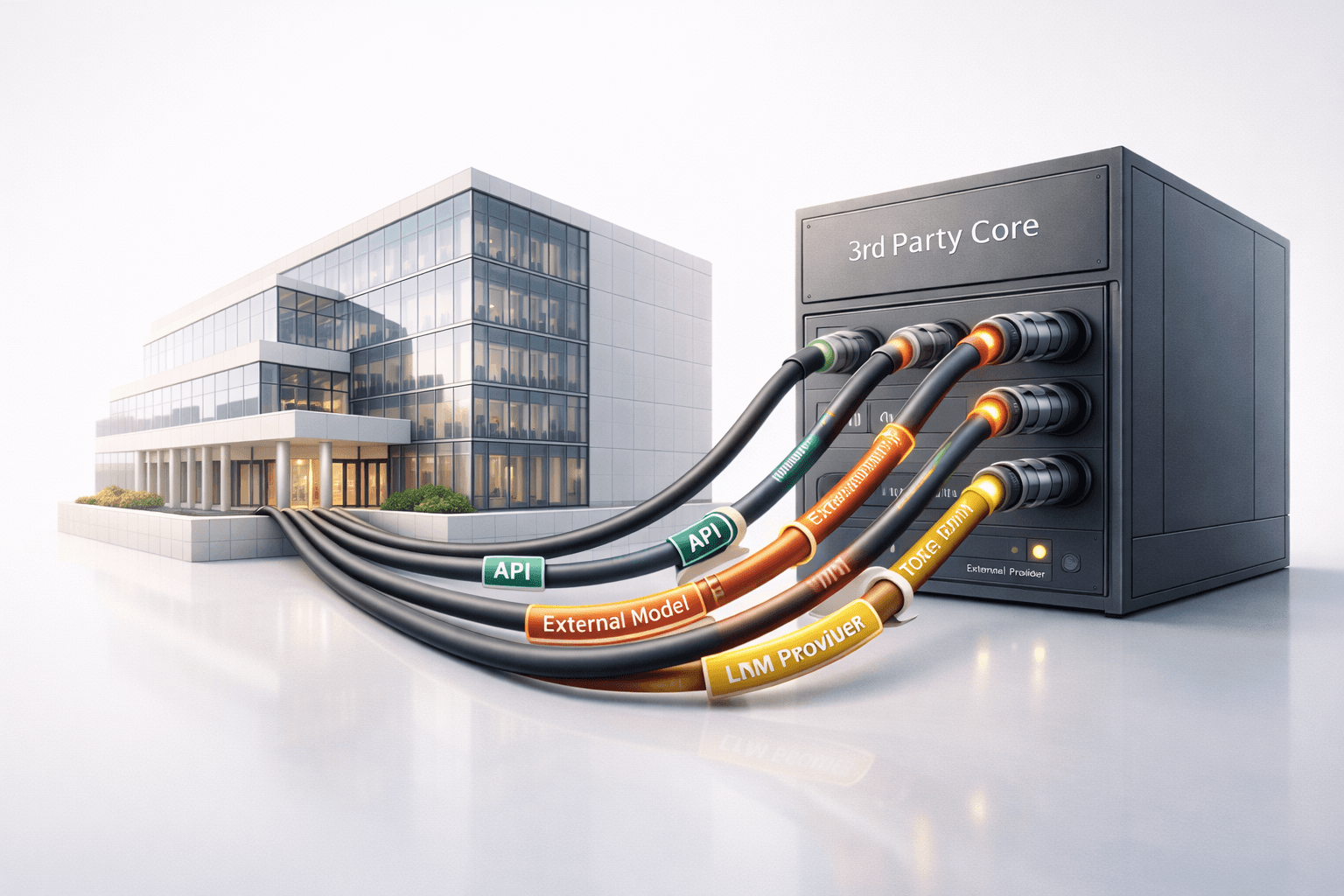

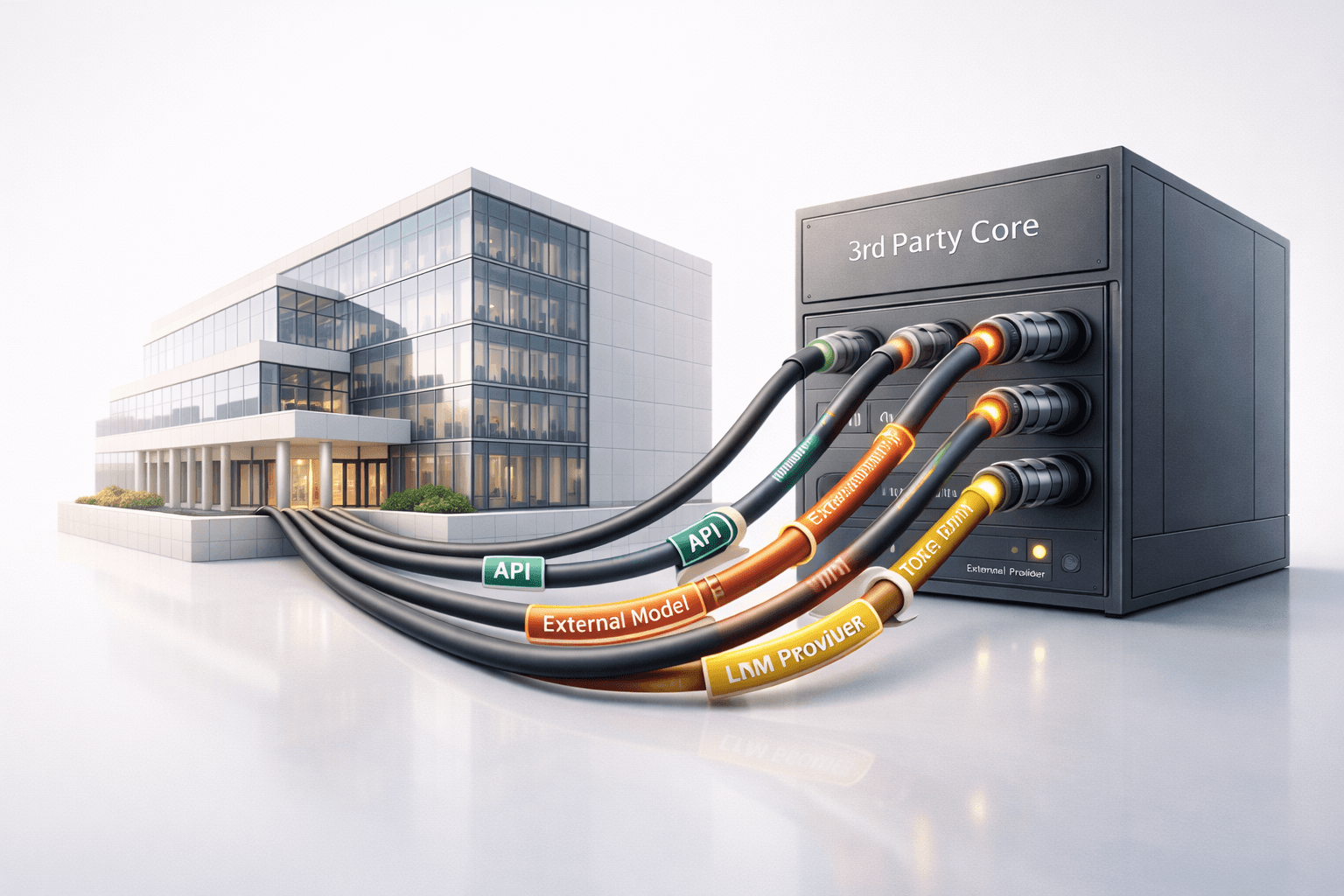

Many enterprise AI stacks appear modular on paper. A typical architecture includes a user interaction layer (chat or voice), an orchestration middleware, an external model API call, post-processing logic, and system updates. This modularity creates the perception of flexibility.

In practice, the reasoning core remains external.

Organizations frequently describe their systems as “model-agnostic.” However, switching providers often entails re-engineering prompts, recalibrating thresholds, and retesting integration logic. Behavioral differences between GPT-family models, Claude-series models, and Gemini variants are non-trivial in production environments.

In cloud infrastructure, application logic remains internal while compute is outsourced. In AI-dependent systems, reasoning logic is partially externalized.

That changes control boundaries fundamentally.

Comparing Cloud Lock-In and Model Lock-In

Cloud lock-in typically centers on infrastructure tooling: proprietary storage services, serverless frameworks, or managed databases. Enterprises can replicate or migrate these with sufficient engineering investment.

Model lock-in is qualitatively different. Training frontier models requires capital and compute scale unavailable to most enterprises. Even deploying advanced open-weight models internally demands GPU clusters, inference optimization, and operational MLOps capabilities that many organizations do not maintain.

In cloud environments, data remains the enterprise’s primary asset. In model-dependent environments, reasoning capacity becomes an externalized asset.

The leverage dynamic shifts accordingly.

What a Real AI Strategy Requires in 2026

An AI strategy in 2026 cannot be defined by API integration alone. It must address structural sovereignty.

This includes establishing explicit abstraction layers between orchestration logic and model calls, implementing multi-provider fallback mechanisms, continuously monitoring behavioral drift, and defining clear execution boundaries for AI-initiated actions. It also requires scenario modeling: what happens if pricing increases by 30%, if inference latency degrades regionally, or if regulatory constraints alter permissible data flows?
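Two of these elements, the abstraction layer and the execution boundary, can be sketched together. Everything named here (`ReasoningModel`, `ProposedAction`, the action names, and the limits) is a hypothetical illustration rather than an established interface.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount_eur: float = 0.0


class ReasoningModel(Protocol):
    """Provider-neutral interface; each vendor gets its own adapter behind it."""

    def propose_action(self, request: str) -> ProposedAction: ...


# Assumed execution boundary: actions the model may trigger, with a value cap each.
AUTO_APPROVE_LIMITS_EUR: dict[str, float] = {
    "send_reply": 0.0,
    "issue_refund": 50.0,
}


def decide(model: ReasoningModel, request: str) -> str:
    """Orchestration depends only on the interface, never on a vendor SDK."""
    action = model.propose_action(request)
    limit = AUTO_APPROVE_LIMITS_EUR.get(action.name)
    if limit is None:
        return "reject"  # action is outside the allowlist
    if action.amount_eur > limit:
        return "escalate_to_human"  # permitted action, but above its cap
    return "execute"


class StubModel:
    """Stand-in adapter that always proposes the action it was built with."""

    def __init__(self, action: ProposedAction) -> None:
        self._action = action

    def propose_action(self, request: str) -> ProposedAction:
        return self._action


small_refund = decide(StubModel(ProposedAction("issue_refund", 20.0)), "refund please")
large_refund = decide(StubModel(ProposedAction("issue_refund", 500.0)), "refund please")
unknown = decide(StubModel(ProposedAction("wire_transfer", 10.0)), "send funds")
```

Because the limits live in the enterprise's own code, a vendor-side behavior change can alter what the model proposes but not what the organization allows it to execute.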

Most importantly, enterprises must decide which components of cognitive capability are core intellectual assets and which are outsourced utilities.

If AI mediates customer communication, processes contracts, or triggers financial transactions, it is not peripheral software. It is part of the execution layer of the organization.

Execution layers demand resilience planning equivalent to financial systems or network infrastructure.

Conclusion: Dependency Is Not Strategy

Between 2023 and 2025, rapid AI adoption created competitive acceleration. In 2026, structural dependencies are visible.

Cloud dependency reshaped enterprise IT over a decade. Model dependency is reshaping enterprise control within three years.

Organizations that mistake API consumption for strategic capability risk embedding outsourced cognition at the core of their operations without architectural safeguards. A real AI strategy must define ownership boundaries, substitution capacity, governance integration, and geopolitical resilience.

Without those elements, what appears to be innovation may simply be dependence—scalable, efficient, and strategically fragile.
